Artificial intelligence (AI) refers to “systems that allow computers or machines to perform or mimic human thinking” (Narang, 2019). AI has been part of radiology for many years, and anyone using a digital X-ray system will be familiar with it. Most of us have also come across AI in cardiac ultrasound, whether it’s the automated calculation of ejection fraction (auto EF) on systems like the Apogee 2300, automated spectral Doppler tracing on the higher-end Edan systems, or strain packages on Philips and GE machines.

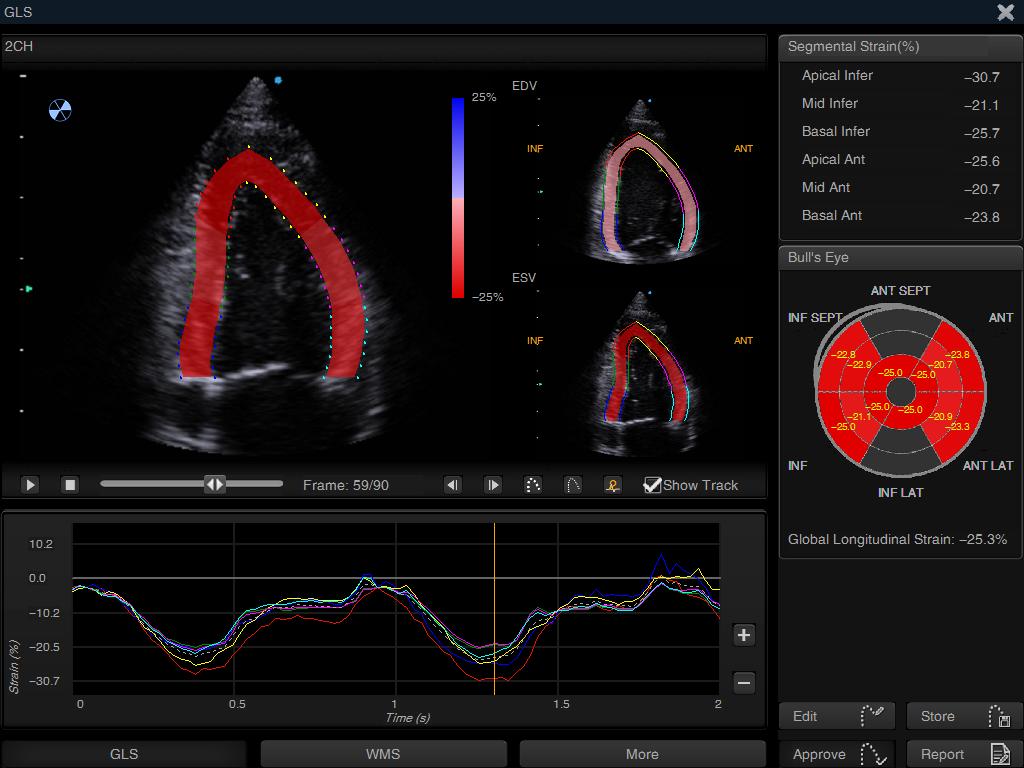

Above: Strain analysis, automatically performed on the Siui Apogee 2300. This same software package can also generate an ejection fraction automatically, as long as the user is skilled enough to obtain the required two orthogonal views.
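The caption doesn't say how the Apogee 2300 computes its auto EF. The standard method that needs two orthogonal views is the biplane method of discs (modified Simpson's rule), so what follows is a minimal sketch of that method, not the vendor's algorithm. It assumes the left-ventricular border has already been traced in the apical four- and two-chamber views and sliced into matching disc diameters (software often uses 20 discs). The function names are illustrative.

```python
import math


def biplane_volume(a4c_diams, a2c_diams, long_axis_length):
    """LV volume by the biplane method of discs (modified Simpson's rule).

    a4c_diams / a2c_diams: disc diameters at the same levels in the apical
    four- and two-chamber views (the two orthogonal views).
    long_axis_length: LV long-axis length, in the same units as the diameters.
    """
    if len(a4c_diams) != len(a2c_diams) or not a4c_diams:
        raise ValueError("need the same, non-zero number of discs in both views")
    disc_height = long_axis_length / len(a4c_diams)
    # Each disc is treated as an elliptical cylinder: area = pi/4 * a * b
    return sum(math.pi / 4 * a * b * disc_height
               for a, b in zip(a4c_diams, a2c_diams))


def ejection_fraction(edv, esv):
    """EF (%) from end-diastolic and end-systolic volumes."""
    return (edv - esv) / edv * 100
```

With diameters and length in cm, the volume comes out in mL. Running the function on the end-diastolic and end-systolic frames gives EDV and ESV, and `ejection_fraction(100, 40)` then gives 60%. This also shows why the second view matters: without it, each disc's second axis has to be assumed rather than measured.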

Machine learning is a branch of AI that allows the computer to continually improve from its ‘experiences’, without explicit instruction from a human. In echocardiography, this usually takes the form of reinforcement learning rather than completely unsupervised learning, with the computer making inferences based on the sonographer’s acceptance or rejection of its analysis. When performing a strain measurement on the left ventricle, for example, the sonographer runs the automated endocardial border detection but will frequently adjust one or two points. The current commercially available systems (whether on-cart, as in the Siui or GE cardiac systems, or offline, such as Tomtec) do not ‘learn’ anything from these corrections; they employ artificial intelligence, but not machine learning. A system using machine learning would adjust its algorithm based on the user’s feedback and improve with each subsequent study.
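The difference between "AI" and "AI that learns from the sonographer" can be shown with a toy model. This is a minimal sketch of a simple online correction, not reinforcement learning in the formal sense and not how any commercial package works. The `BorderDetector` class, its fixed 1.5 mm error and its learning rate are all hypothetical. The "image" is simulated as the true point positions.

```python
from dataclasses import dataclass, field


@dataclass
class BorderDetector:
    """Toy endocardial point detector (hypothetical, for illustration only).

    learn=False: corrections fix the current study and are then discarded.
    learn=True: a per-point offset is learned from the sonographer's corrections.
    """
    learn: bool = False
    rate: float = 0.2                      # fraction of each correction absorbed
    offsets: dict = field(default_factory=dict)

    def _fixed_algorithm(self, image):
        # Stand-in for a pre-trained model with a hypothetical systematic
        # error: every point is placed 1.5 mm short of its true position.
        return {name: pos - 1.5 for name, pos in image.items()}

    def detect(self, image):
        raw = self._fixed_algorithm(image)
        return {name: pos + self.offsets.get(name, 0.0) for name, pos in raw.items()}

    def feedback(self, proposed, corrected):
        """Record where the sonographer left each point after review."""
        if not self.learn:
            return                         # AI without machine learning: nothing kept
        for name, final_pos in corrected.items():
            error = final_pos - proposed[name]
            self.offsets[name] = self.offsets.get(name, 0.0) + self.rate * error


truth = {"apex": 80.0, "septal_base": 10.0, "lateral_base": 12.0}
for learn in (False, True):
    detector = BorderDetector(learn=learn)
    for study in range(10):
        proposed = detector.detect(truth)
        detector.feedback(proposed, corrected=truth)  # sonographer drags points back
    print(learn, detector.detect(truth)["apex"])
```

After ten studies, the fixed detector still places the apex at 78.5 mm, so the sonographer has to move it every time. The learning detector ends up within about 0.2 mm of where the sonographer puts it. A lower `rate` makes the system slower to adapt but less swayed by a single unusual correction.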

The application of AI in echocardiography has been held back because, in echo, we rely far more on cine loops than on still images. This problem is now beginning to be addressed, and the potential of AI in echocardiography is particularly exciting because the modality is so operator-dependent, which can often undermine confidence in its results.
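One reason cine loops are harder than still images is that the algorithm first has to find the right frames in the loop. As a minimal sketch, assume a per-frame segmentation model (hypothetical) has already produced the left-ventricular cavity area for each frame of one cardiac cycle. End-diastole can then be taken as the frame with the largest cavity and end-systole as the frame with the smallest. In practice, ECG timing or valve events are often used as well.

```python
def cine_summary(lv_areas_cm2):
    """Pick end-diastolic (ED) and end-systolic (ES) frames from one cycle.

    lv_areas_cm2: LV cavity area per frame, e.g. from a per-frame
    segmentation model (hypothetical input).
    Returns (ed_index, es_index, fractional_area_change_percent).
    """
    if len(lv_areas_cm2) < 2:
        raise ValueError("need at least two frames")
    frames = range(len(lv_areas_cm2))
    ed = max(frames, key=lv_areas_cm2.__getitem__)   # largest cavity
    es = min(frames, key=lv_areas_cm2.__getitem__)   # smallest cavity
    eda, esa = lv_areas_cm2[ed], lv_areas_cm2[es]
    fac = (eda - esa) / eda * 100                    # fractional area change, %
    return ed, es, fac


# Hypothetical areas (cm²) across eight frames of one cycle
print(cine_summary([10.0, 9.5, 8.0, 6.5, 6.0, 6.8, 8.5, 9.8]))
```

For these made-up values, frame 0 is end-diastole, frame 4 is end-systole, and the area change is 40%. A still-image algorithm would need a person to choose those two frames first.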

Of all the areas of ultrasound, echo is arguably the most challenging to master, and the sonographer’s level of training and experience makes an enormous difference to measurements. AI largely removes this variability, making echo more reproducible. This doesn’t mean that you will agree with the computer’s measurement every time; in fact, you almost certainly won’t, because the AI should perform at the average of a group of experts, and no individual expert sits exactly on that average. In most instances, however, it should be close enough that it needs little or no input from you. For beginners, AI guidance and analysis should give them the confidence to pick up the probe and image the heart, unhindered by the fear that they won’t be able to interpret their images.
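The point about the expert average can be made concrete. Suppose an AI reproduces the consensus (mean) of several experts. Every individual expert will still disagree with it slightly. This is a minimal sketch using only Python's standard library. The four EF readings are hypothetical, and the coefficient of variation is used here as one common summary of inter-observer spread.

```python
from statistics import mean, stdev


def interobserver_summary(measurements):
    """Summarise several experts' measurements of the same parameter.

    Returns the consensus (mean), the coefficient of variation (%)
    and each expert's deviation from the consensus.
    """
    consensus = mean(measurements)
    cv_percent = stdev(measurements) / consensus * 100
    deviations = [m - consensus for m in measurements]
    return consensus, cv_percent, deviations


# Hypothetical EF readings (%) from four experts on the same study
consensus, cv, deviations = interobserver_summary([55.0, 60.0, 58.0, 63.0])
print(consensus, round(cv, 1), deviations)
```

Here the consensus is 59%, and no expert matches it exactly, yet all of them are within 4 percentage points of it. An AI trained towards that consensus would be "close enough" for each reader without agreeing perfectly with any of them.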

Benefits of AI:

At Imperial College, our research group is currently working on ways in which AI can be used to guide novice ultrasound users to obtain a series of captures which the computer can analyse to rule serious pathology in or out. This would have clear utility in the acute setting, where a cardiologist is not always on hand. In the veterinary world it could aid in cardiac screening both in the emergency setting and in detecting obvious, serious pathology (such as severe systolic dysfunction, HCM or mitral valve disease). This topic was discussed in detail in December’s newsletter.

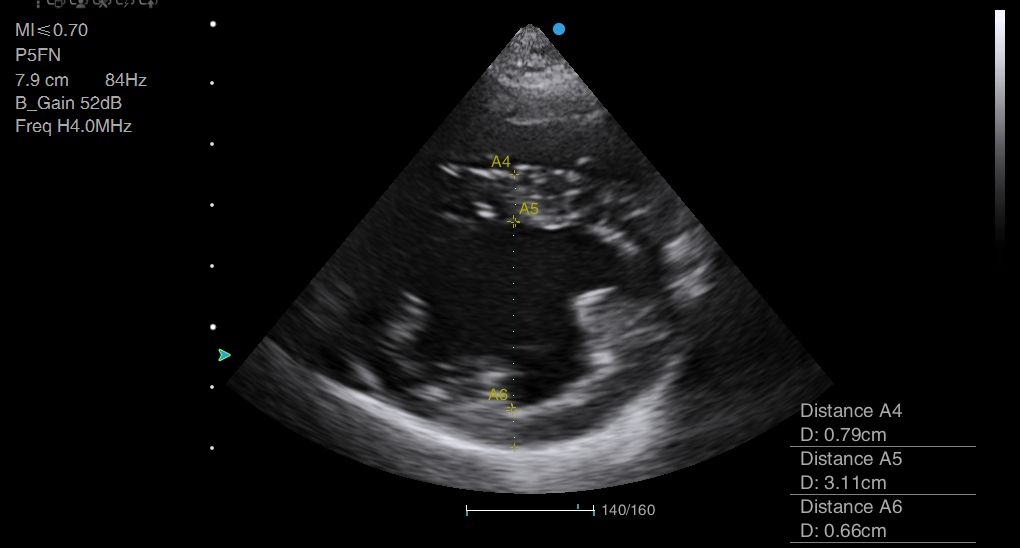

Above: Wall thickness and cavity diameter measurements can be time-consuming to perform, and could be very easily automated.
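Once a detector has found the tissue boundaries, the arithmetic for these measurements is simple. This is a minimal sketch that assumes a hypothetical automated detector returns four boundary depths (mm) along one M-mode line. From those depths it derives septal thickness, internal diameter and posterior wall thickness at end-diastole and end-systole, and then the standard fractional shortening, FS = (LVIDd − LVIDs) / LVIDd × 100. The class and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class MModeBoundaries:
    """Boundary depths (mm) along one M-mode line at one instant, top to bottom.

    Hypothetical output of an automated boundary detector.
    """
    septum_rv_side: float
    septum_lv_side: float
    lvpw_endocardium: float
    lvpw_epicardium: float


def linear_measurements(b: MModeBoundaries):
    """Return (IVS, LVID, LVPW) in mm."""
    ivs = b.septum_lv_side - b.septum_rv_side        # septal thickness
    lvid = b.lvpw_endocardium - b.septum_lv_side     # cavity diameter
    lvpw = b.lvpw_epicardium - b.lvpw_endocardium    # posterior wall thickness
    return ivs, lvid, lvpw


def fractional_shortening(lvid_d, lvid_s):
    """FS (%) from end-diastolic and end-systolic internal diameters."""
    return (lvid_d - lvid_s) / lvid_d * 100


# Hypothetical detector output for one beat
diastole = linear_measurements(MModeBoundaries(20.0, 26.0, 66.0, 73.0))
systole = linear_measurements(MModeBoundaries(20.0, 29.0, 55.0, 64.0))
print(diastole, systole, fractional_shortening(diastole[1], systole[1]))
```

With these made-up depths, IVSd, LVIDd and LVPWd are 6, 40 and 7 mm, and FS is 35%. The time-consuming part that AI would take over is placing the boundaries, not the subtraction.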

References

Narang, A., 2019. Artificial Intelligence and Echocardiography. American College of Cardiology, June 18, 2019.

© 2025 | AUA – All Rights Reserved